Managing Swapfiles and Pagefiles can be confusing in VMware or Hyper-V because there are multiple files to configure at different levels.

- Guest VM's native OS swapfile

- Host related swapfile

- Hypervisor's per-guest VM swapfile

1) Guest VM's native OS swapfile

Even though you may assign 4 GB of RAM to a virtual machine, the OS running in that VM may create its own pagefile on whatever virtual disks you provide. It sizes that file according to its native behavior, based on the full amount of "physical" RAM that it sees. The hypervisor has no role in whether or how a guest OS builds or uses a swapfile; this must be managed entirely from within the guest OS. As a virtualization administrator, however, you can create a dedicated virtual hard disk on a fast storage tier and then, within the guest OS, ensure that this disk is used for paging.

2) Host related swapfile

Just like the guest OS, your virtualization host has a swapfile or pagefile to fall back on when it does not have enough memory for its own duties as virtualization infrastructure (provisioning new VMs, vMotioning, etc.).

2a) VMware System Swapfile using the Web Client: this is done via Hosts and Clusters -> Host -> Manage Tab -> Settings SubTab -> System Swap

Figure: vCenter Server Web Client configuring Host System Swap File

Edit System Swap Settings:

- Enabled - if unchecked = ALL RAM ALL THE TIME - NO SWAPFILE!

- Datastore - use a specific datastore

- Host cache - use part of the host cache

- Preferred swap file location - use the host's preferred swap file location

2b) Windows Server with Hyper-V: this is managed via the System control panel -> Advanced Tab -> Performance -> Settings... -> Advanced Tab -> Virtual Memory -> Change...

Figure: Server 2012 R2 Hyper-V server configuring Host PageFile

- If all page files are removed = ALL RAM ALL THE TIME - NO SWAPFILE!

- Don't forget to click "Set" after configuring a pagefile on a disk; otherwise the change is not applied.

Hyper-V Server or Windows Server (Server Core) Host Swapfile

Modification is done by script or command line entries:

Add a 2 GB pagefile:

wmic pagefileset create name="E:\pagefile.sys"

wmic pagefileset where name="E:\\pagefile.sys" set InitialSize=2048,MaximumSize=2048

Delete a pagefile:

wmic pagefileset where name="C:\\pagefile.sys" delete
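One detail that is easy to miss in the commands above: the `where` clauses use doubled backslashes in the path, while `create` uses a single one. As a rough illustration only, the Python sketch below builds the same command strings for a given path and size. The helper names (`wql_escape`, `create_pagefile_cmds`, `delete_pagefile_cmd`) are hypothetical, and the script just prints the commands instead of running them.

```python
def wql_escape(path: str) -> str:
    """Double each backslash, matching the where clauses shown above."""
    return path.replace("\\", "\\\\")


def create_pagefile_cmds(path: str, size_mb: int) -> list[str]:
    """Return the two wmic commands that add a fixed-size pagefile."""
    return [
        # 'create' takes the path with single backslashes.
        f'wmic pagefileset create name="{path}"',
        # The 'where' filter needs the backslashes doubled.
        f'wmic pagefileset where name="{wql_escape(path)}" '
        f"set InitialSize={size_mb},MaximumSize={size_mb}",
    ]


def delete_pagefile_cmd(path: str) -> str:
    """Return the wmic command that removes a pagefile entry."""
    return f'wmic pagefileset where name="{wql_escape(path)}" delete'


if __name__ == "__main__":
    for cmd in create_pagefile_cmds("E:\\pagefile.sys", 2048):
        print(cmd)
    print(delete_pagefile_cmd("C:\\pagefile.sys"))
```

Running it prints the same three commands listed above, which makes it a convenient way to generate them for other drives or sizes before pasting them into a Server Core console.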

3) Hypervisor's per-guest VM Swapfile

Modern hypervisors create a temporary swap file for hypervisor related memory management needs. This is NOT a swapfile that the Guest OS could ever see or use for its own swapfile needs! However, you may want this to be placed on an efficient disk to ensure that VMs boot as efficiently as possible. There are some key differences in how ESXi and Hyper-V use these files.

VMware:

The host creates VMX swap files automatically, provided there is sufficient free disk space at the time a virtual machine is powered on. If it cannot be created, it prevents power on!

This file is used because, while physical memory is reserved for the system at power-on, memory for needs like the virtual machine monitor (VMM) and virtual devices can be swapped after initialization. The VMX swap feature means that the 50 MB or more of live VMX memory can shrink to only 10 MB, freeing up memory resources for other needs. This is critical in overcommitted memory situations.
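To get a feel for the scale, here is a small sketch of that arithmetic. It takes the 50 MB and 10 MB figures at face value as per-VM numbers; the VM count is a made-up example, the function name is hypothetical, and real overhead varies from VM to VM.

```python
RESIDENT_WITHOUT_VMX_SWAP_MB = 50  # figure quoted in the text (a lower bound)
RESIDENT_WITH_VMX_SWAP_MB = 10     # figure quoted in the text


def vmx_swap_savings_mb(vm_count: int) -> int:
    """Estimate host RAM freed when VMX memory shrinks on every VM."""
    per_vm = RESIDENT_WITHOUT_VMX_SWAP_MB - RESIDENT_WITH_VMX_SWAP_MB
    return per_vm * vm_count


if __name__ == "__main__":
    # Hypothetical host running 100 VMs.
    print(vmx_swap_savings_mb(100))  # prints 4000
```

At 40 MB per VM, a host with 100 VMs gets back roughly 4 GB, which is why the feature matters most when memory is overcommitted.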

By default, the swap file is created in the same location as the virtual machine's configuration file, but you may change the swapfile datastore to another shared storage location. Moving the swapfile to a local datastore may improve local performance, but it may also slow vMotions later, because pages swapped to a local swap file on the source host must be transferred across the network to the destination host.

Hyper-V:

Smart Paging in Hyper-V is used only when all of the following are true:

- The VM is being restarted (directly or via a host restart)

- The hypervisor discovers that there is no available RAM

- No memory can currently be reclaimed from any other VMs running on the host

At that time the Smart Paging file is used by the VM as memory to complete startup. Within 10 minutes, the memory mapped to the Smart Paging file will need to be provisioned into RAM, and the Smart Paging file will be deleted. The Smart Paging feature exists ONLY to provide reliable restarts of VMs (not cold boots, and not running out of RAM later).
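The three conditions can be read as a single all-or-nothing check. The sketch below is only a model of that decision as described here, not Hyper-V's actual logic; the function and parameter names are hypothetical.

```python
def uses_smart_paging(
    is_restart: bool,
    host_has_free_ram: bool,
    can_reclaim_from_other_vms: bool,
) -> bool:
    """Return True only when all three conditions from the list hold."""
    return (
        is_restart                          # restart, not a cold boot
        and not host_has_free_ram           # no available RAM on the host
        and not can_reclaim_from_other_vms  # nothing to reclaim from other VMs
    )


if __name__ == "__main__":
    # Restart on a fully committed host: Smart Paging steps in.
    print(uses_smart_paging(True, False, False))
    # Cold boot under the same memory pressure: it does not.
    print(uses_smart_paging(False, False, False))
```

The second call is the case people most often get wrong: a cold boot on a starved host is not covered, no matter how little RAM is free.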

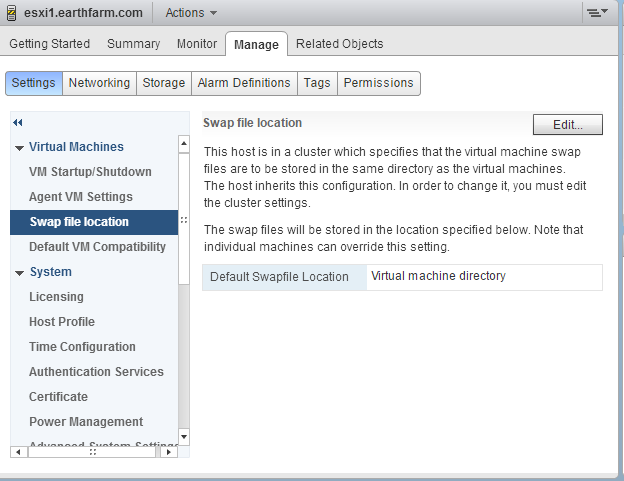

3a) vSphere Web Client to configure the VM support swapfile location: Hosts & Clusters -> Host -> Manage Tab -> Settings SubTab -> Virtual Machines -> Swap File Location

Figure: vSphere Web client configuring Default Swap File Location

vSphere Desktop Client to configure VM support swapfile: Select Host -> Configuration Tab -> Software Group -> Virtual Machine Swapfile Location

This setting can also be overridden on a per-VM basis, if needed, in the VM's Swapfile Location property.

3b) Hyper-V Smart Paging: Select a VM -> Right Click (or Actions) -> Settings -> Smart Paging File Location

The file location is determined during installation and can then be reconfigured on a VM-by-VM basis in each VM's settings.

I hope that you feel a little more solid on the 3 types of swapfiles that you will run into when managing virtualization!

Keep it virtual!